Reasoning modes

“Jagged intelligence” is a term coined by AI researcher Andrej Karpathy

In this research, we offer a partial explanation for this phenomenon: these models might not always use, or we might not know how to activate, their full potential.

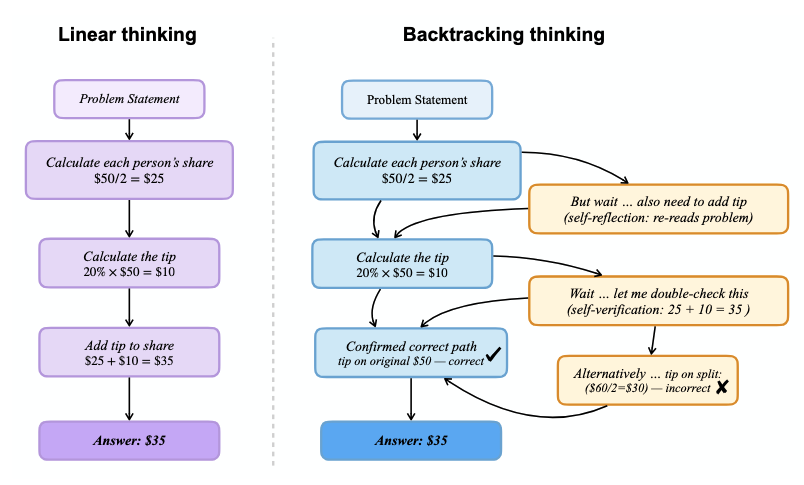

When reasoning models perform a certain reasoning behavior (e.g., backtracking or verification), why do they choose to generate that step?

In this part of the series, we’ll gain insight into this question from a post-training perspective, and more specifically, from the post-training data.

During post-training, models are optimized via reinforcement learning or distillation to exhibit reasoning. There is a distinct risk that this optimization merely encourages the model to adopt the syntax of reasoning behaviors without the substance.

Let’s start with a “simple” knowledge question:

It’s likely that most large reasoning models have been trained on this piece of knowledge and can answer directly without thinking. However, when we let the models think, they still express uncertainty, as shown in the following reasoning trace.

Okay, so I need to figure out the capital of Vietnam. I’m not entirely sure, but I think it’s somewhere in Southeast Asia. I remember hearing that Vietnam has a capital, but I’m not 100% certain which city it is. Let me try to recall any information I might have.

…

I think the capital isn’t one of the really big cities like Ho Chi Minh or Hanoi. Wait, no, actually, I think Hanoi is the capital. I’ve heard of Hanoi being mentioned in the news a lot, especially regarding political events or historical contexts. It’s been a while since I studied this, so I’m a bit fuzzy on the details.

…

Why does the model express uncertainty in this case?

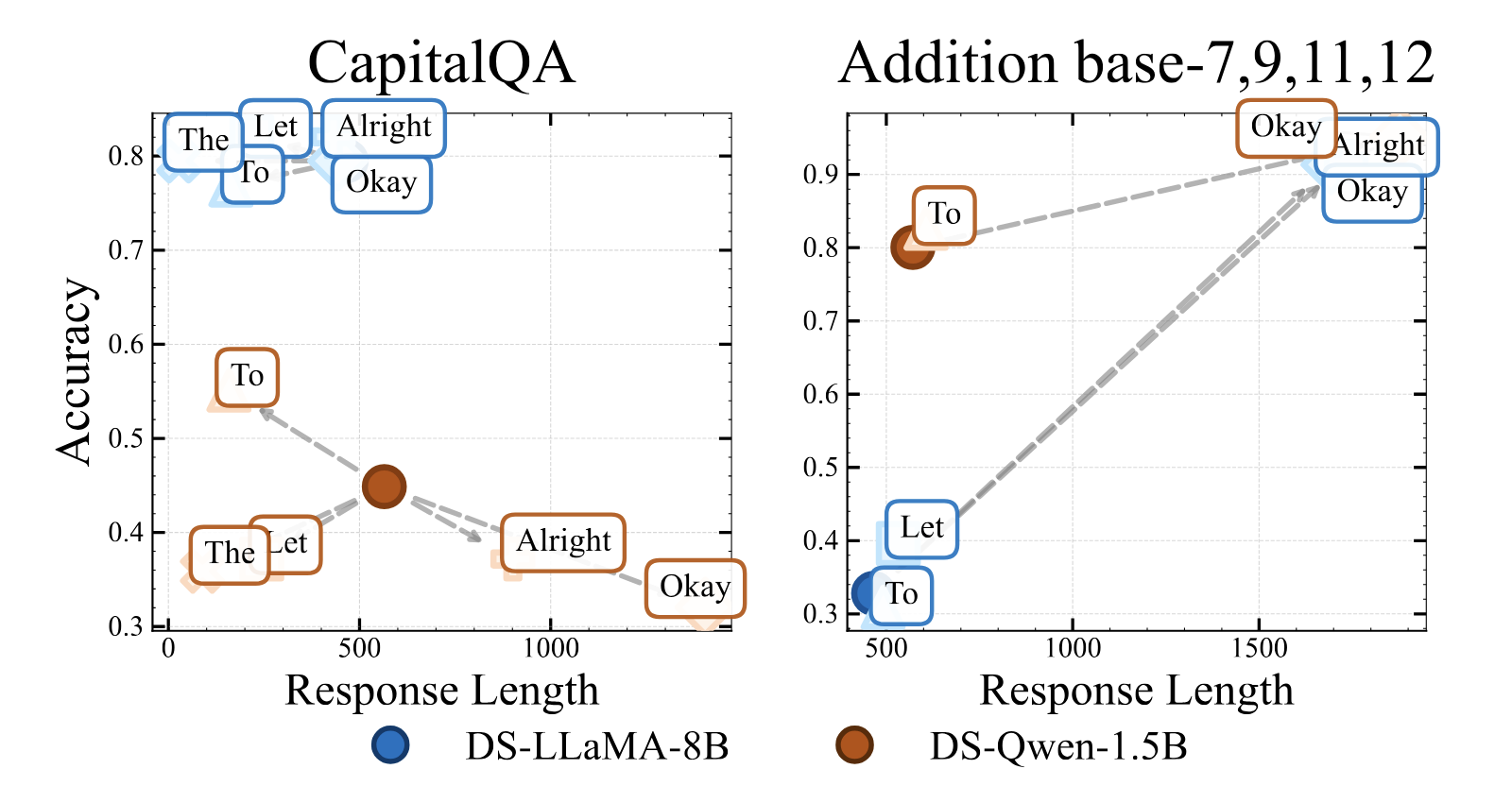

It turns out that changing a single prefix token of the response can heavily affect the thinking pattern of models, as shown in the following examples.

Question

The Prefix Effect: A single spurious starting token can heavily affect model reasoning behaviors.

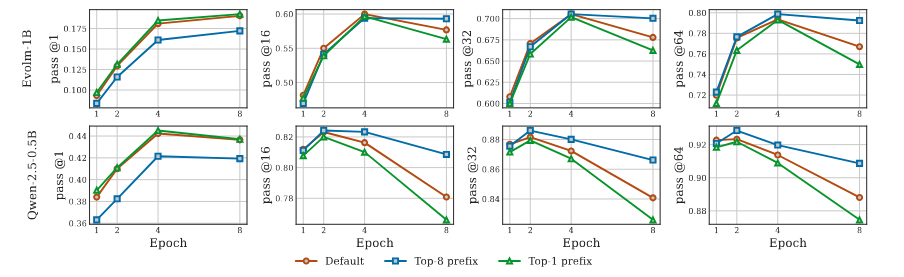

As a result, when we perturb a single prefix token of the thinking trace, performance and response length vary significantly.

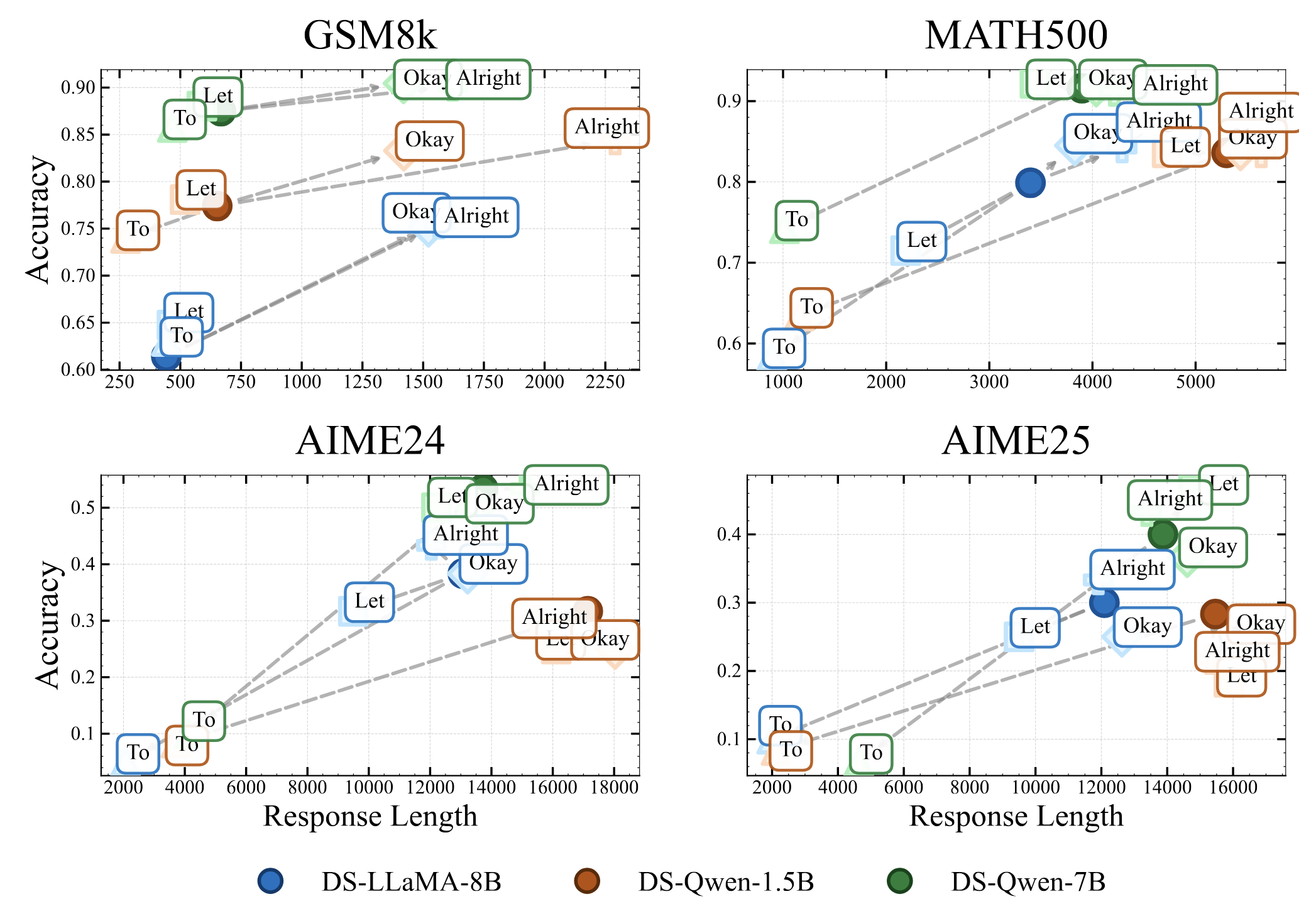

This finding also holds when we evaluate on math benchmarks such as GSM8k, MATH-500, AIME24, and AIME25.

Forks in the roads

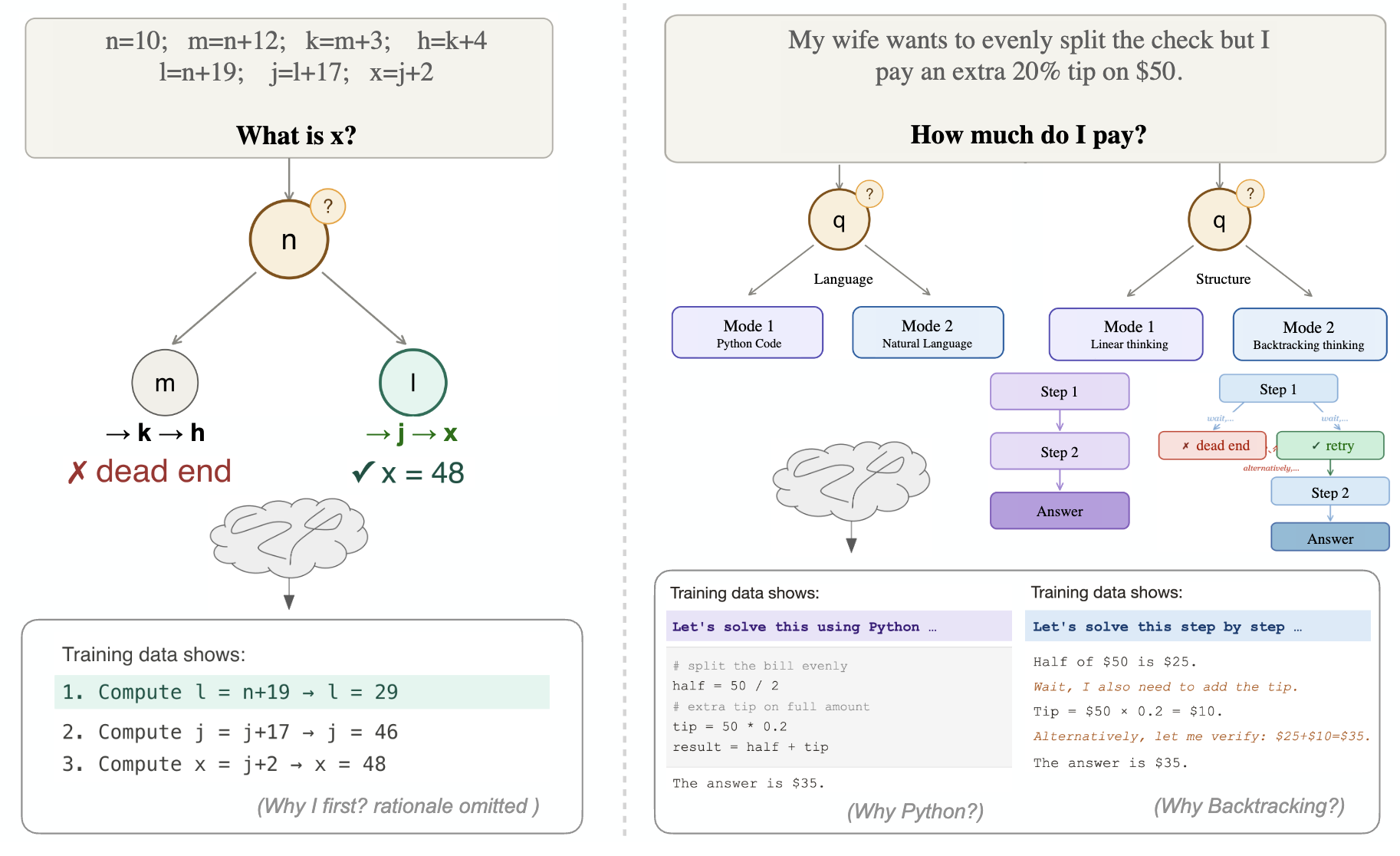

In our recent work, we investigate why distilled models exhibit such brittleness. Our key hypothesis is that linear and non-linear thinking represent distinct reasoning modes that co-exist in the training data (for example, a mixture of outputs from models like DeepSeek-V3 and DeepSeek-R1).

Forks in the roads and Coverage Shrinkage

To test this hypothesis, we designed controlled case studies that isolate and expose these decision-point structures. Our first setting is a graph-based navigation task, inspired by prior work on indecipherable nodes in next-token prediction.

In this task, a model must traverse a star graph from a start node to a target node, while encountering branching points that provide no information about which branch leads to success.

Q: Let each letter represent a numerical variable. These variables are defined as follows:

n = 10; m = n + 12; k = m + 3; h = k + 4; l = n + 19; j = l + 17; x = j + 2.

What is the resulting value of x?

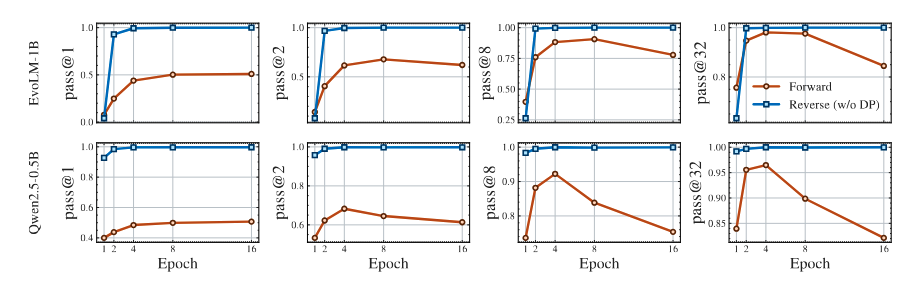

We construct two controlled data variants that differ only in whether such decision points are present during post-training. In the Forward setting, the model encounters explicit decision points—states with multiple possible continuations where only one leads to a correct solution. Solving the problem therefore requires the model to decide and select among reasoning paths.

Forward Solution

To find the value of x, we compute the variables step by step:

- n = 10

- l = n + 19 = 10 + 19 = 29 ⚠ Decision point: At this step, the solution path can branch depending on which variable is computed.

- j = l + 17 = 29 + 17 = 46

- x = j + 2 = 46 + 2 = 48

Thus, the final answer is x = 48.
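To make the notion of a decision point concrete, here is a minimal sketch that parses the definitions from the example question and flags any variable that more than one other definition depends on. The helper names (`parse_definitions`, `decision_points`, `evaluate`) are illustrative assumptions and are not taken from the original work.

```python
import re

QUESTION = "n = 10; m = n + 12; k = m + 3; h = k + 4; l = n + 19; j = l + 17; x = j + 2."

def parse_definitions(text):
    """Parse 'a = 10' or 'b = a + 3' into {var: (parent_or_None, constant)}."""
    defs = {}
    for part in text.rstrip(".").split(";"):
        match = re.fullmatch(r"([a-z]) = (?:([a-z]) \+ )?(\d+)", part.strip())
        if match is None:
            raise ValueError(f"cannot parse definition: {part!r}")
        var, parent, const = match.group(1), match.group(2), int(match.group(3))
        defs[var] = (parent, const)
    return defs

def decision_points(defs):
    """Return variables that have more than one dependent, i.e. forks in the graph."""
    children = {}
    for var, (parent, _) in defs.items():
        if parent is not None:
            children.setdefault(parent, []).append(var)
    return {parent: kids for parent, kids in children.items() if len(kids) > 1}

def evaluate(defs, target):
    """Compute the value of `target` by following its chain of parents."""
    parent, const = defs[target]
    return const if parent is None else evaluate(defs, parent) + const

defs = parse_definitions(QUESTION)
forks = decision_points(defs)   # after n, the solver can continue with m or with l
answer = evaluate(defs, "x")    # follows x -> j -> l -> n
```

In this example the only fork is at `n`: a forward solver that has just computed `n = 10` must pick between `m` and `l`, and only the `l` branch reaches `x`.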

In contrast, the Reverse (w/o decision point) setting removes these forks by presenting the correct trajectory in reverse format (target to source), effectively eliminating any need for path selection by the model.

Reverse Solution

A: To solve the problem, we compute the variables step by step.

- Substitute j = l + 17 into x, which gives x = (l + 17) + 2 = l + 19.

- Substitute l = n + 19 into x, which gives x = (n + 19) + 19 = n + 38.

- Compute x using n = 10, which gives x = 10 + 38 = 48.

Thus, the final answer is x = 48.
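The reason the reverse format has no forks is that every variable has exactly one definition, so walking from the target back to the source never requires a choice. The sketch below illustrates this on the example problem; the `DEFS` encoding and the `reverse_trace` helper are assumptions made for illustration, not the authors' data pipeline.

```python
# Each variable maps to (parent, constant); parent is None for the source variable.
DEFS = {
    "n": (None, 10),
    "m": ("n", 12), "k": ("m", 3), "h": ("k", 4),    # distractor branch
    "l": ("n", 19), "j": ("l", 17), "x": ("j", 2),   # branch that reaches x
}

def reverse_trace(defs, target):
    """Walk from `target` back to the source, accumulating the constant offset.

    Returns the list of substitution steps and the final numeric value.
    """
    steps = []
    var, offset = target, 0
    while True:
        parent, const = defs[var]
        offset += const
        if parent is None:
            return steps, offset
        steps.append(f"{target} = {parent} + {offset}")
        var = parent

steps, value = reverse_trace(DEFS, "x")
for step in steps:
    print(step)
print("x =", value)
```

The loop never inspects the distractor branch (`m`, `k`, `h`), which mirrors how the Reverse setting removes the need for path selection.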

Because both settings correspond to the same underlying task, any behavioral differences can be directly attributed to the impact of decision points in the data.

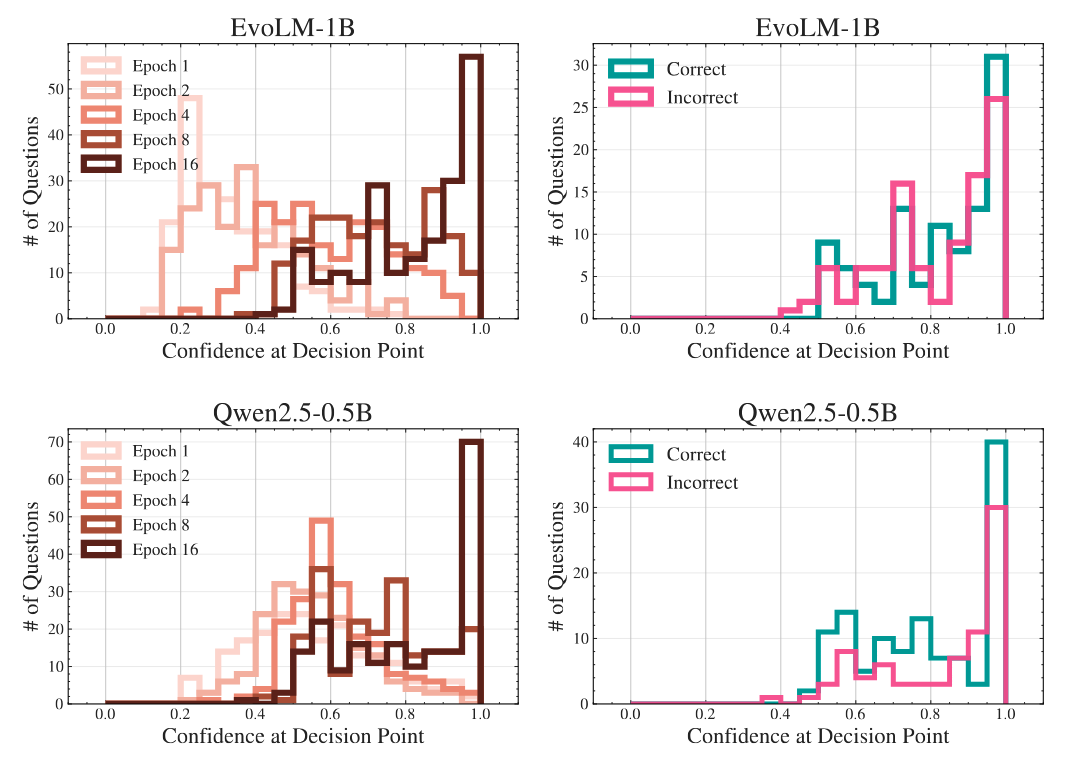

The figure above shows that the model’s confidence at decision points increases sharply throughout training. However, this increase is not selective: the model is highly confident not only when it chooses the correct branch, but also when it chooses an incorrect one. This shows that training with decision points in the data can push the model toward overconfident, single-path commitments, rather than calibrated uncertainty over multiple valid continuations. As a result, alternative trajectories are progressively suppressed, leading to the observed coverage shrinkage and drop in pass@k.

We further observe that the same coverage shrinkage emerges during RLVR when training on the Forward setting, but not on the Reverse setting. This suggests that coverage shrinkage is driven not only by the learning algorithm, but also by the data and the presence of decision points in reasoning.
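To see how single-path commitment can hurt pass@k, it helps to compute the metric directly. The text does not say which estimator is used; the sketch below assumes the widely used unbiased estimator pass@k = 1 - C(n - c, k) / C(n, k), where n is the number of samples per problem and c is the number of correct ones. The per-problem counts are made-up numbers chosen purely for illustration, not results from the experiments.

```python
from math import comb

def pass_at_k(n, c, k):
    """Unbiased pass@k estimate from n samples of which c are correct."""
    if not (0 <= c <= n and 1 <= k <= n):
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    # comb(n - c, k) is 0 when fewer than k samples are incorrect.
    return 1.0 - comb(n - c, k) / comb(n, k)

def mean_pass_at_k(correct_counts, n, k):
    """Average pass@k over problems, given the correct count for each problem."""
    return sum(pass_at_k(n, c, k) for c in correct_counts) / len(correct_counts)

N = 8
# Hypothetical correct counts on 4 problems (illustrative only).
spread = [2, 2, 2, 2]      # uncertain everywhere, occasionally right on each problem
committed = [8, 8, 0, 0]   # fully confident: always right or always wrong

for k in (1, 4):
    print(k, mean_pass_at_k(spread, N, k), mean_pass_at_k(committed, N, k))
```

With these toy counts the committed model has the higher pass@1 but the lower pass@4, because confident errors leave no alternative trajectory for repeated sampling to find.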

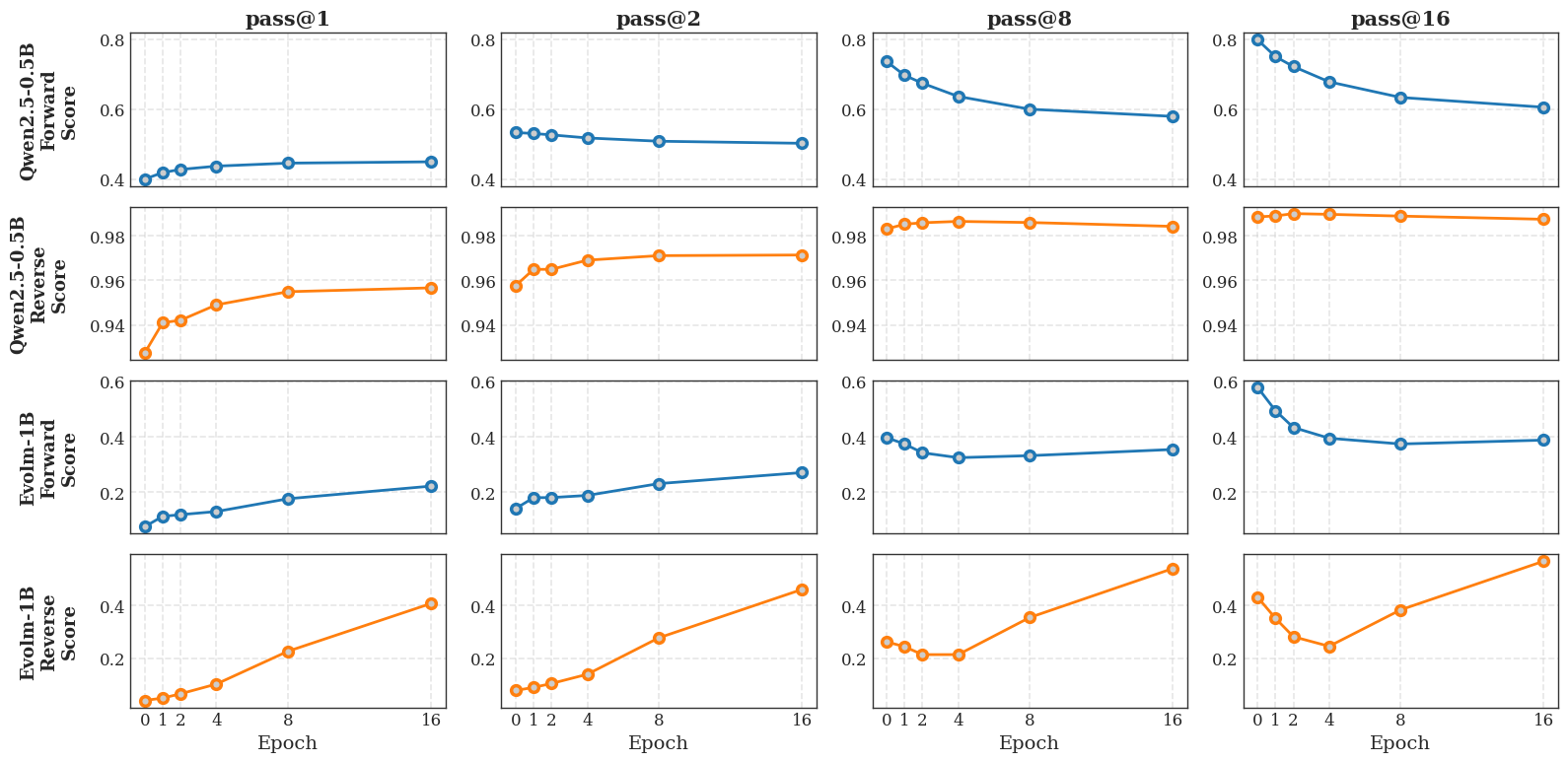

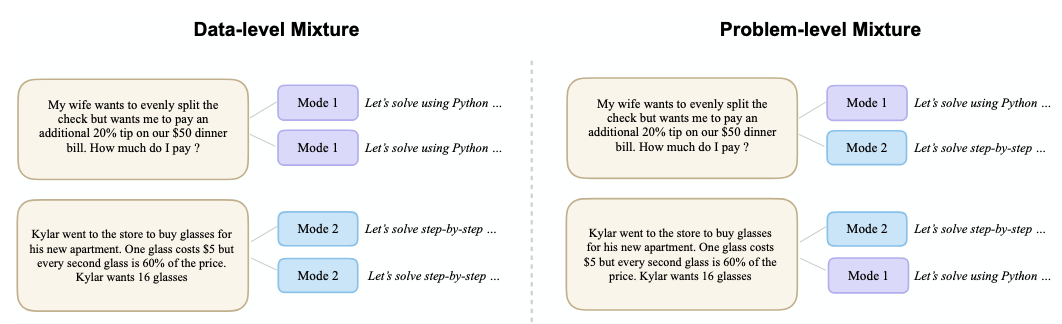

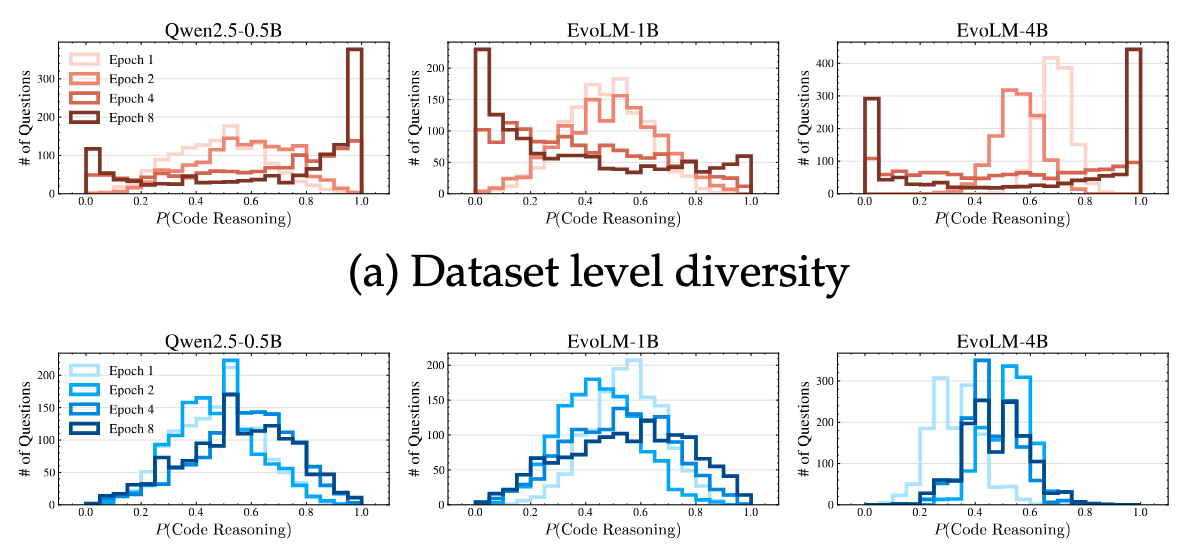

Next, we investigate whether models trained on mixed data can learn to balance different reasoning modes under repeated sampling. A key question is how the structure of diversity in the training data affects this decision. In our experiments, we construct two data designs with identical diversity ratios (50% natural language (NL), 50% code) but different organization (see the figure above):

- Data-level diversity: each problem is solved using a single mode, but the dataset is globally balanced across the modes.

- Problem-level diversity: each problem appears with both reasoning modes.

This setup isolates whether coverage depends not just on how much diversity is present, but also on how it is distributed.
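The difference between the two designs is easiest to see in code. The following is a minimal sketch, assuming each training example is a (problem, mode) pair and that modes alternate in the data-level design; the function names and the alternating assignment are illustrative assumptions, and the sketch does not control for total dataset size.

```python
from collections import Counter

MODES = ("NL", "code")

def data_level_diversity(problems):
    """One mode per problem; alternating assignment keeps the dataset 50/50 overall."""
    return [(p, MODES[i % 2]) for i, p in enumerate(problems)]

def problem_level_diversity(problems):
    """Every problem appears once with each mode."""
    return [(p, mode) for p in problems for mode in MODES]

def mode_ratio(dataset):
    """Fraction of examples per mode."""
    counts = Counter(mode for _, mode in dataset)
    return {mode: counts[mode] / len(dataset) for mode in MODES}

def modes_per_problem(dataset):
    """Set of modes seen for each problem."""
    seen = {}
    for problem, mode in dataset:
        seen.setdefault(problem, set()).add(mode)
    return seen

problems = [f"problem_{i}" for i in range(4)]  # placeholder problem ids
data_level = data_level_diversity(problems)
problem_level = problem_level_diversity(problems)
```

Both datasets have the same global mode ratio, but only the problem-level design ever shows the model two different modes for the same problem.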

If first tokens act as decision points, can we use them to recover lost coverage? In this experiment, we perturb the sampling of the first token by forcing it to be drawn from the top-k options, instead of using standard decoding (with no need for retraining!). We observe that this can effectively nudge the model into different reasoning paths and significantly restore its lost coverage.
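One way such a first-token perturbation could be implemented is sketched below. The exact scheme is not specified in the text, so this version assumes a round-robin assignment of the top-k first tokens across samples, with the rest of each response decoded as usual. `continue_fn` is a hypothetical stand-in for whatever decoding call the model exposes, and the logits are toy values.

```python
def top_k_tokens(first_token_logits, k):
    """Return the k tokens with the highest first-position logits, best first."""
    ranked = sorted(first_token_logits, key=first_token_logits.get, reverse=True)
    return ranked[:k]

def sample_with_first_token_perturbation(first_token_logits, continue_fn, num_samples, k):
    """Force each sample to start from one of the top-k first tokens (round-robin).

    continue_fn(first_token) is assumed to decode the rest of the response
    with the model's normal sampling settings.
    """
    candidates = top_k_tokens(first_token_logits, k)
    outputs = []
    for i in range(num_samples):
        first = candidates[i % len(candidates)]
        outputs.append((first, continue_fn(first)))
    return outputs

# Toy first-token logits and a dummy continuation function (illustrative only).
toy_logits = {"Okay": 5.1, "To": 3.2, "Let": 2.9, "First": 1.0, "Wait": 0.4}

def dummy_continue(first_token):
    return f"{first_token} <rest of response>"

samples = sample_with_first_token_perturbation(toy_logits, dummy_continue, num_samples=6, k=3)
first_tokens = [first for first, _ in samples]
```

Under standard decoding most samples here would start with the dominant token; forcing the spread over the top-k candidates is what gives the other reasoning paths a chance to appear.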

Conclusion

These results point toward a new perspective: improving reasoning in LLMs may require not only scaling compute or refining objectives, but also explicitly modeling and preserving the structure of reasoning paths during data curation and training. In particular, this perspective helps explain why developing a single model that robustly operates across both instruct (non-thinking) and reasoning (thinking) modes remains challenging.
