Scaling compute

More parameters and more tokens

Bender et al.

According to the GPT-3 paper

As mentioned in a talk by Hyung Won Chung from OpenAI:

“Somehow the model learns to perform many, many tasks only trained with next-token prediction.”

In the same talk, he proposed the massive multitask learning hypothesis:

“Beyond some scale, the easiest way to do well on next-token prediction is for the model to find a set of general skills that are applicable to many tasks. For example, these skills include learning languages, understanding, and reasoning.”

Recently, a new axis for scaling these models has emerged: test-time compute. In this scaling regime, pass@1 performance on many tasks improves substantially as more test-time compute is added.

Long before LLMs, many traditional algorithms already showed that increasing test-time compute (i.e., doing more work at inference/test time rather than at training time) leads to better performance:

- Best-First or A* Search: Expanding more nodes → closer to optimal path.

- Monte Carlo Tree Search (MCTS): More playouts → deeper/denser search tree → stronger move choices.

- Beam Search in Decoders (e.g., HMMs, CRFs, SMT): increase beam width → examine more candidate sequences.

- k-Nearest Neighbors (k-NN): Higher k often reduces noise → better predictive performance (up to a point).

- Markov Chain Monte Carlo (MCMC): More MCMC samples → better posterior estimates.
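To make the pattern concrete, here is a minimal sketch using plain Monte Carlo estimation of π. This is a simpler relative of the MCMC item above, chosen only for brevity; the function name `estimate_pi` and the sample counts are illustrative assumptions. Nothing about the "model" changes between runs, only the amount of work spent at estimation time.

```python
import math
import random


def estimate_pi(num_samples: int, seed: int = 0) -> float:
    """Estimate pi by sampling points uniformly in the unit square."""
    rng = random.Random(seed)
    inside = 0
    for _ in range(num_samples):
        x, y = rng.random(), rng.random()
        # Count points that fall inside the quarter circle of radius 1.
        if x * x + y * y <= 1.0:
            inside += 1
    return 4.0 * inside / num_samples


if __name__ == "__main__":
    # More samples means more compute at estimation time.
    for n in (100, 10_000, 1_000_000):
        est = estimate_pi(n)
        print(f"samples={n:>9,}  estimate={est:.4f}  abs_error={abs(est - math.pi):.4f}")
```

The error typically shrinks roughly like $1/\sqrt{n}$, so each extra digit of accuracy costs about 100× more samples; the exact numbers printed depend on the seed.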

In LLM inference, test-time compute often refers to the number of tokens generated (e.g., generating multiple responses or producing longer responses). Scaling test-time compute can be achieved via:

- Chain-of-thought (CoT)

- Self-consistency: sample many CoT paths

- Tree-of-thoughts search

- Reflection loops / Re-evaluation or verification passes

- Reasoning models with thinking tokens
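As one example from the list above, self-consistency can be sketched as a majority vote over the final answers of several sampled reasoning paths. The sketch below does not call any real model: `sample_answer` is a hypothetical stand-in that returns the right answer with an assumed probability of 0.6, and the wrong answers are made up.

```python
import random
from collections import Counter


def sample_answer(rng: random.Random, p_correct: float = 0.6) -> str:
    """Hypothetical stand-in for one sampled CoT path; returns only its final answer."""
    if rng.random() < p_correct:
        return "9"
    return rng.choice(["27", "3", "13"])  # made-up wrong answers


def majority_vote(answers: list[str]) -> str:
    """Return the most frequent final answer."""
    return Counter(answers).most_common(1)[0][0]


def self_consistency(num_samples: int, seed: int = 0) -> str:
    """Sample several answers and aggregate them by majority vote."""
    rng = random.Random(seed)
    return majority_vote([sample_answer(rng) for _ in range(num_samples)])


if __name__ == "__main__":
    for n in (1, 5, 25, 125):
        print(f"samples={n:>3}  voted answer={self_consistency(n)}")
```

With a single sample the result is only as reliable as one path; as the number of samples grows, the vote concentrates on the most likely answer, which is the sense in which this method trades test-time compute for accuracy.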

However, unlike in the previous examples, it is often not very clear why this helps.

Reasoning in text

“The process of drawing conclusions based on available information (usually a set of premises).”

In this article, we refer to “reasoning” in LLMs as the act of generating intermediate steps before the final answer. There are three common ways to elicit it:

- Chain-of-thought with Prompting: Prompt LMs such that they generate intermediate tokens/steps before the final answer.

- Chain-of-thought without Prompting: Select a decoding path where the model “reasons” before answering.

- Reasoning via RLVR or Distillation: Post-training LMs to reinforce reasoning behaviors such as backtracking, verification, error correction, …

The first and second approaches try to steer the generation using the input context or the initial response tokens. They suggest that base/instruct models are capable of reasoning step-by-step in text to some extent.

Furthermore, LLMs can be finetuned so that they exhibit reasoning behaviors when solving problems. These behaviors include initialization, deduction, knowledge augmentation, example testing, uncertainty estimation, and backtracking.

1. From direct answers to chain-of-thought answers

The following is an example from

| | Standard Prompting | Chain-of-Thought Prompting |
|---|---|---|
| Model Input | Q: Roger has 5 tennis balls. He buys 2 more cans of tennis balls. Each can has 3 tennis balls. How many tennis balls does he have now? A: The answer is 11. Q: The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, how many apples do they have? | Q: Roger has 5 tennis balls. He buys 2 more cans of tennis balls. Each can has 3 tennis balls. How many tennis balls does he have now? A: Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. 5 + 6 = 11. The answer is 11. Q: The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, how many apples do they have? |
| Model Output | A: The answer is 27. ✕ | A: The cafeteria had 23 apples originally. They used 20 to make lunch. So they had 23 - 20 = 3. They bought 6 more apples, so they have 3 + 6 = 9. The answer is 9. ✓ |

This example uses a 1-shot prompt, which includes one CoT example in the prompt as a demonstration. In practice, including a simple instruction like “think step-by-step” has a similar effect.

Several works try to give a deeper understanding of why this improves performance.
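The two prompting styles can be sketched as plain string templates. The helper names below are hypothetical, and the exact wording and placement of the zero-shot instruction is an assumption; real prompts vary by model and chat template.

```python
# Exemplar text is taken from the tennis-ball example in the table.
EXEMPLAR_QUESTION = (
    "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
    "Each can has 3 tennis balls. How many tennis balls does he have now?"
)
EXEMPLAR_REASONING = (
    "Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. "
    "5 + 6 = 11. The answer is 11."
)


def one_shot_cot_prompt(question: str) -> str:
    """One worked exemplar with intermediate steps, then the new question."""
    return f"Q: {EXEMPLAR_QUESTION}\nA: {EXEMPLAR_REASONING}\n\nQ: {question}\nA:"


def zero_shot_cot_prompt(question: str) -> str:
    """No exemplar; a short instruction asks for step-by-step reasoning."""
    return f"Q: {question}\nThink step-by-step.\nA:"


if __name__ == "__main__":
    q = (
        "The cafeteria had 23 apples. If they used 20 to make lunch "
        "and bought 6 more, how many apples do they have?"
    )
    print(one_shot_cot_prompt(q))
    print("---")
    print(zero_shot_cot_prompt(q))
```

Both prompts end with an open `A:` so that the model's continuation starts with the reasoning rather than with a bare answer.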


Hypothesis 1: Chain of thought is a better estimator for locality structure

Consider the following theoretical setting:

- (A sequence of length $N$) A set of random variables $\{Y_i\}_{i = 1}^N$ taking support on a finite set $\mathcal{X}$.

- $p_d$ is the data distribution defined by a Bayes net.

- Training data is a sequence of variable indices $i \in \{ 1,\dots,N \}$ and variable values $v_i \in \mathcal{X}$ in the format `<index>:<value>`.

- Observation distribution $p_\text{obs}$ takes support on a set $\mathcal{Y}_\text{obs} \subseteq \mathcal{P}(\{1,\dots,N\})$.

- Given an autoregressive conditional probability estimation model $q$, we can have the following estimators:

- Direct prediction: $\hat{q}_D(Y_i = y_i \mid Y_j = y_j) = q(Y_i = y_i \mid Y_j = y_j)$

- Scaffolded generation: $\hat{q}_S(Y_i = y_i \mid Y_j = y_j) = \frac{1}{M} \sum_{k=1}^{M} q(Y_i = y_i \mid \{Y_s = y_s^{k}\}_{s \in S}, Y_j = y_j)$ where $y_s^{k} \sim q(Y_s \mid \{Y_t = y_t^{k}\}_{t \in S \mid t \prec s}, Y_j = y_j)$

- Free generation
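The difference between direct prediction and scaffolded generation can be illustrated on a toy three-variable chain $Y_1 \to Y_2 \to Y_3$. Everything in this sketch is an assumption made for illustration: the probabilities are made up, the model $q$ is taken to know the local conditionals exactly, and the direct estimator is assumed to fall back to the marginal of $Y_3$ because $Y_1$ and $Y_3$ are never observed together. Since $Y_3$ is independent of $Y_1$ given $Y_2$ in a chain, $q(Y_3 \mid Y_2, Y_1)$ reduces to $q(Y_3 \mid Y_2)$ here.

```python
import random

# Toy chain Y1 -> Y2 -> Y3 over {0, 1}; all numbers are made up for illustration.
P_Y2_GIVEN_Y1 = {0: 0.1, 1: 0.9}  # P(Y2 = 1 | Y1 = y1)
P_Y3_GIVEN_Y2 = {0: 0.2, 1: 0.8}  # P(Y3 = 1 | Y2 = y2)
P_Y3_MARGINAL = 0.5               # P(Y3 = 1) when P(Y1 = 1) = 0.5


def true_conditional(y1: int) -> float:
    """Exact P(Y3 = 1 | Y1 = y1), marginalizing over Y2."""
    p2 = P_Y2_GIVEN_Y1[y1]
    return p2 * P_Y3_GIVEN_Y2[1] + (1 - p2) * P_Y3_GIVEN_Y2[0]


def direct_estimate(y1: int) -> float:
    """Assumed behavior of q on a pair never seen together: ignore y1, return the marginal."""
    return P_Y3_MARGINAL


def scaffolded_estimate(y1: int, num_samples: int, seed: int = 0) -> float:
    """Sample the intermediate Y2 from q(Y2 | Y1), then average q(Y3 = 1 | Y2)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(num_samples):
        y2 = 1 if rng.random() < P_Y2_GIVEN_Y1[y1] else 0
        total += P_Y3_GIVEN_Y2[y2]
    return total / num_samples


if __name__ == "__main__":
    print("true      :", true_conditional(1))
    print("direct    :", direct_estimate(1))
    print("scaffolded:", scaffolded_estimate(1, num_samples=20_000))
```

In this toy setup the true value is $0.9 \cdot 0.8 + 0.1 \cdot 0.2 = 0.74$; the direct estimate stays at the marginal $0.5$, while the scaffolded estimate approaches $0.74$ as $M$ grows.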

Theorem 3.1.

Let $S$ be the space of possible sequences consisting of variable indices followed by variable values. Let $u$ be the uniform distribution over $S$. Let $H(p, q)$ denote the cross entropy between distributions $p$ and $q$. We consider the following risk:

Let $q^{*} = \arg\min_{q} R(q)$ be a minimizer of the risk over all possible probability distributions. Then, for all non-adjacent random variables $Y_i$ and $Y_j$, reasoning through intermediate variables has lower bias than direct prediction. That is, for any $y_i, y_j \in \mathcal{X}$:

\[\begin{aligned} \left| \mathbb{E}_{S \sim q^{*}}\!\left[ \hat{q}_S(Y_i = y_i \mid Y_j = y_j) \right] - p_d(Y_i = y_i \mid Y_j = y_j) \right|^2 &< \\ \left| \hat{q}_D(Y_i = y_i \mid Y_j = y_j) - p_d(Y_i = y_i \mid Y_j = y_j) \right|^2. \end{aligned}\]

Hypothesis 2: Chain of thought is easier to learn for autoregressive language models.

Some tasks are easier to learn and generalize than others.

In the example below, we present a failure case of CoT when prompting ChatGPT on a simple chain-of-arithmetic task:

In the above example, we ask GPT-4 for the value of a variable given a chain of equations. The final result is correct, but is that the whole story? The answer starts in the wrong direction and then comes back to the correct branch, so it contains 2 redundant steps.

It seems like the model should have the ability to backtrack.
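To show what a solution without redundant steps looks like, here is a small sketch on a hypothetical chain-of-equations instance. The equations, variable names, and the `solve` helper are all made up for illustration and are not the instance from the original example. The solver walks backward from the target variable to a constant and then evaluates forward, so it never touches the distractor branch.

```python
from typing import Dict, List, Tuple, Union

# A variable is either a constant or (other_variable, delta), meaning other_variable + delta.
Definition = Union[int, Tuple[str, int]]

# Hypothetical instance: the chain a -> b -> c, plus a distractor chain x -> y.
EQUATIONS: Dict[str, Definition] = {
    "a": 2,
    "b": ("a", 3),  # b = a + 3
    "c": ("b", 1),  # c = b + 1
    "x": 7,         # distractor
    "y": ("x", 2),  # distractor: y = x + 2
}


def solve(target: str, equations: Dict[str, Definition]) -> Tuple[int, List[str]]:
    """Return the target's value and the minimal list of variables evaluated.

    Assumes the definitions are acyclic and every chain ends in a constant.
    """
    # Walk backward from the target until a constant is reached.
    chain: List[str] = []
    var = target
    while True:
        chain.append(var)
        definition = equations[var]
        if isinstance(definition, int):
            break
        var = definition[0]

    # Evaluate forward along the chain, starting from the constant.
    value = 0
    for name in reversed(chain):
        definition = equations[name]
        value = definition if isinstance(definition, int) else value + definition[1]
    return value, list(reversed(chain))


if __name__ == "__main__":
    print(solve("c", EQUATIONS))
```

A left-to-right generator does not get this backward pass for free: if it commits to evaluating `x` or `y` first, it has to spend extra tokens returning to the relevant branch, which is the kind of detour described above.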

2. From “linear reasoning” to “non-linear reasoning”

| | Chain-of-Thought Prompting | Large Reasoning Models (e.g., o1, R1) |
|---|---|---|
| Model Input | Q: Roger has 5 tennis balls. He buys 2 more cans of tennis balls. Each can has 3 tennis balls. How many tennis balls does he have now? A: Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. 5 + 6 = 11. The answer is 11. Q: The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, how many apples do they have? | Q: The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, how many apples do they have? (Input is simple; the complexity is handled by the model’s hidden chain of thought) |
| Model Output | A: The cafeteria had 23 apples originally. They used 20 to make lunch. So they had 23 - 20 = 3. They bought 6 more apples, so they have 3 + 6 = 9. The answer is 9. ✓ | A: ⓘ Thought Process (Internal) 1. Initial calc: 23 - 20 = 13… 2. Wait, checking arithmetic: 23 minus 10 is 13. 23 minus 20 is 3. 3. Correction: The remaining amount is 3, not 13. 4. Add purchase: 3 + 6 = 9. 5. Final Verify: Does the question imply the 6 were bought before lunch? No, usually sequential. Answer holds. The answer is 9. ✓ |

Even though LLMs break the problem down into steps under CoT prompting, the output often reflects an already finished solution. This means the “thought” does not include behaviors common in human reasoning, such as expressing uncertainty, verification, or backtracking.

However, these behaviors can emerge through RLVR (Reinforcement Learning with Verifiable Rewards) finetuning.

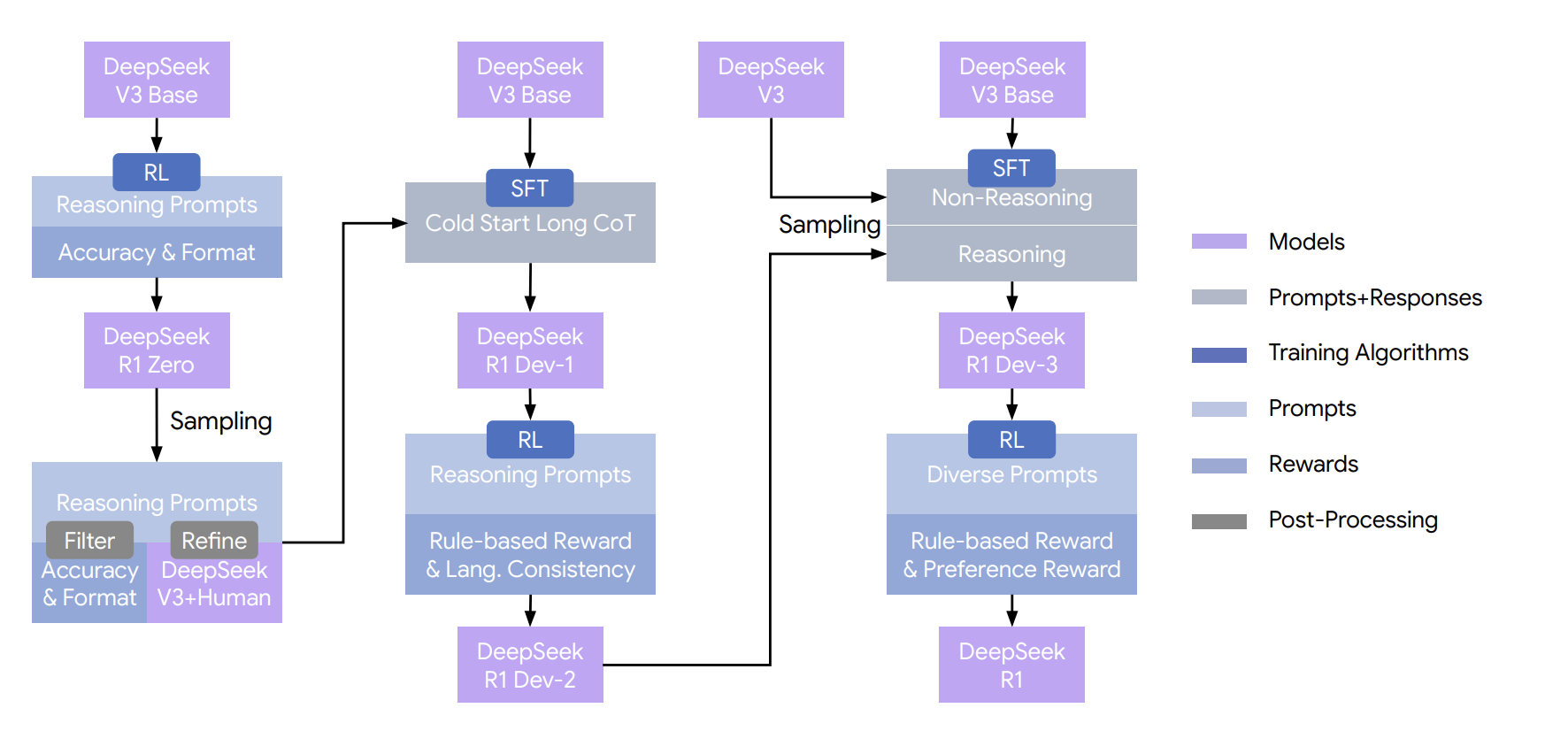
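The “verifiable” part of RLVR means the reward can be computed by a program rather than a learned judge. Below is a minimal sketch of such a reward for numeric answers, assuming responses end with a sentence of the form “The answer is N.” as in the examples in this article. The function names and the answer pattern are assumptions for illustration and are not the reward used in any particular paper.

```python
import re
from typing import Optional

# Assumed answer format: "The answer is <number>", as in the examples in this article.
ANSWER_PATTERN = re.compile(r"The answer is\s*(-?\d+(?:\.\d+)?)")


def extract_answer(response: str) -> Optional[str]:
    """Return the last number stated as the answer, or None if there is none."""
    matches = ANSWER_PATTERN.findall(response)
    return matches[-1] if matches else None


def verifiable_reward(response: str, ground_truth: str) -> float:
    """Return 1.0 if the extracted answer equals the ground truth, else 0.0."""
    predicted = extract_answer(response)
    if predicted is None:
        return 0.0
    return 1.0 if float(predicted) == float(ground_truth) else 0.0


if __name__ == "__main__":
    good = "They used 20, so 23 - 20 = 3. They bought 6 more, so 3 + 6 = 9. The answer is 9."
    bad = "The answer is 27."
    print(verifiable_reward(good, "9"), verifiable_reward(bad, "9"))
```

Note that this reward only checks the final answer and says nothing about the intermediate text, so behaviors such as verification or backtracking are not rewarded directly; they are reinforced only insofar as they lead to correct answers.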

Another post-training recipe for making models think is reasoning distillation, which is also described in the DeepSeek-R1 paper and is shown to be more effective for small models. Distilling stronger models into smaller ones achieves outstanding results, whereas training smaller models with large-scale reinforcement learning demands significant computational resources and may still not match the effectiveness of distillation.

Thus, “effective” reasoning behaviors or “Aha moments” appear to emerge more readily in larger models. So, more parameters and more tokens.
